
IBM Watson speech to text narrowband

11/29/2023

The biggest challenge for speech recognition? Perfecting accuracy

The single biggest challenge is still enhancing the accuracy of transcriptions. As speech is far from monotone and languages vary across regions, accents, dialects, and grammar rules, perfecting the algorithms takes time and data. While some use cases already work with the current error rates, voice recognition needs to become even more accurate to enable deeper analysis. The best voice recognition software can reportedly achieve as high as 97% accuracy in US English, while Google, Microsoft, and IBM Watson all disclosed numbers around 95% in 2018 (presumably these numbers are higher today). Accuracy is, however, still lower for less widely spoken languages.

While we are talking about small differences in percentages, the impact is rather large. The higher the accuracy rate becomes, the more possibilities it opens. As Andrew Ng, former chief scientist at Baidu and one of the most recognized experts in speech recognition, put it back in 2016: “As speech-recognition accuracy goes from 95% to 99%, we’ll go from barely using it to using all the time!” (And if you are interested, the standard measure of accuracy works as follows: Word Error Rate = (Substitutions + Deletions + Insertions) / Number of Words Spoken.)

In a contact center context, accuracy is further complicated by background noise and by low-quality audio from narrowband phone calls. However, improvement can already be seen here as mobile phone manufacturers and operators continue implementing VoLTE and wideband audio (also known as “HD Voice”) technology.
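The word error rate formula above can be illustrated with a short Python sketch. This is not how any particular vendor scores its engine; it is a minimal example that assumes whitespace tokenization and lowercasing, and the function name `word_error_rate` is made up for illustration. It counts substitutions, deletions, and insertions together as a word-level edit distance and divides by the number of words actually spoken.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words spoken.

    Assumption: words are split on whitespace and compared case-insensitively.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: spoken word missing from transcript
                curr[j - 1] + 1,     # insertion: extra word in transcript
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("me" -> "be") and one deletion ("tomorrow") over 5 spoken words.
print(word_error_rate("please call me back tomorrow", "please call be back"))  # 0.4
```

An accuracy figure such as 95% corresponds roughly to a WER of 5%, although vendors may normalize text differently before scoring.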

This also means that we already have the capabilities to start working on larger speech-to-text projects with relatively fast deployment.

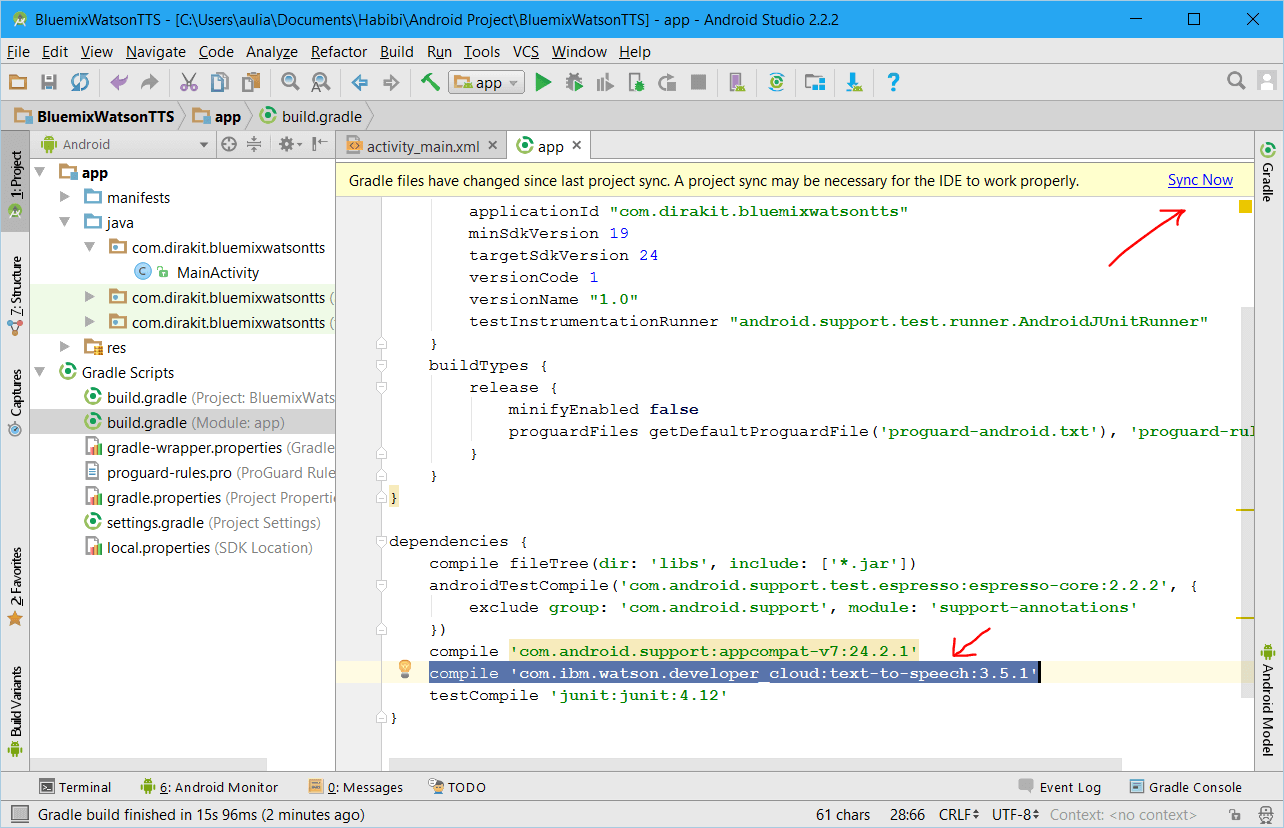

The bigger the operations get, the more recordings are stored, making them impossible to process without machine help.

As a simple visualization, consider a contact center with 25 seats working in 2 shifts. If we assume 8-hour shifts with an average talk time of 45 min/hour, the amount of material collected per day averages 300 hours. For a single person listening for a full 8 hours every working day, this one day's worth of material would take over a month to process, even at an accelerated 1.2x playback speed. The question isn’t about getting data, but rather how to leverage it into business opportunities. Consider the possible implications from the following perspectives: QA, business development, and analysis.

Of course, we are still some way from being able to utilize this technology fully. However, we are on the right track, and the simpler solutions (which can manage with higher error rates) can already be implemented in various languages. More on the different opportunities later in this blog post.

While the industry is, as discussed, still some way from getting the full benefits of the technology, big players such as Microsoft Azure, Google, IBM Watson, Amazon, and Baidu (in China) invest billions in voice recognition software and deep learning. Furthermore, while the bigger players mostly focus on perfecting accuracy for the larger languages, with English at the forefront, several smaller local companies are crafting voice recognition for their own markets, as also seen at this year’s CCW in Berlin. For the Nordic region, companies like Inscripta (Finland), Gamgi (Sweden), and Capturi (Denmark) are working towards enhancing local language recognition.

At LeadDesk, we have followed the development of speech-to-text for a long time and have created endpoints compatible with the most used services. This means that customers can already use software from several vendors and hook it up to LeadDesk quite easily.
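The recording-volume estimate for the 25-seat contact center can be checked with a few lines of Python. The function names are hypothetical, and the 8-hour listening day for the reviewer is an assumption added here to turn listening hours into working days.

```python
def daily_recording_hours(seats: int, shifts: int, shift_hours: float,
                          talk_minutes_per_hour: float) -> float:
    """Hours of call audio recorded per day across all seats and shifts."""
    return seats * shifts * shift_hours * talk_minutes_per_hour / 60


def review_working_days(recorded_hours: float, playback_speed: float,
                        listening_hours_per_day: float = 8.0) -> float:
    """Working days one person needs to listen to everything.

    Assumption: the reviewer listens for `listening_hours_per_day` each day.
    """
    return recorded_hours / playback_speed / listening_hours_per_day


recorded = daily_recording_hours(seats=25, shifts=2, shift_hours=8,
                                 talk_minutes_per_hour=45)
days = review_working_days(recorded, playback_speed=1.2)
print(f"{recorded:.0f} hours recorded per day")        # 300 hours
print(f"{days:.2f} working days to review one day")    # 31.25 working days
```

At roughly 21 working days per month, 31.25 working days is indeed more than a month of listening for a single day of calls.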
Buying habits and the way we interact with technology are changing. Contact centers need to adapt, both by delivering services in the channels where the customers are and by embracing technology to provide even better service and improve the agent experience. This means both going omnichannel and leveraging their data even further.

Speech-to-text software (also referred to as voice or speech recognition software) transcribes audio files into text, usually in a word processor to enable editing and search. It’s the same technology that works in the background of voice-enabled assistants such as Siri, Alexa, and Google Assistant. Voice recognition works by breaking spoken words down into small samples (e.g. at a rate of 16 kHz, or 16,000 samples per second) and then using complex algorithms to combine the samples, match them to local-language pronunciation, and start forming words. If you want to dive even deeper into the technical side, check out this post from Adam Geitgey.

Why should Contact Centers care?

As contact centers live and breathe voice, it hardly surprises anyone that they have tons of data available.
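To make the sampling step mentioned earlier more concrete (16 kHz, or 16,000 samples per second), here is a small Python sketch. It uses a synthetic tone instead of real speech, and the 25 ms frame length is an assumption chosen for illustration rather than something the post specifies. It only shows how audio becomes a sequence of numbers that can be cut into short chunks for later processing; the recognition itself is far more involved.

```python
import math

SAMPLE_RATE_HZ = 16_000  # from the text: 16,000 samples per second


def sample_tone(duration_s: float, freq_hz: float = 440.0,
                rate: int = SAMPLE_RATE_HZ) -> list[float]:
    """Stand-in for recorded speech: a sine tone sampled `rate` times per second."""
    n_samples = int(duration_s * rate)
    return [math.sin(2 * math.pi * freq_hz * i / rate) for i in range(n_samples)]


def split_into_frames(samples: list[float], frame_ms: int = 25,
                      rate: int = SAMPLE_RATE_HZ) -> list[list[float]]:
    """Cut the samples into consecutive, non-overlapping frames.

    Assumption: 25 ms frames; any trailing partial frame is dropped.
    """
    frame_len = rate * frame_ms // 1000
    last_start = len(samples) - frame_len
    return [samples[i:i + frame_len] for i in range(0, last_start + 1, frame_len)]


samples = sample_tone(duration_s=1.0)
frames = split_into_frames(samples)
print(len(samples), "samples in one second of audio")
print(len(frames), "frames of", len(frames[0]), "samples each")
```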
